AR/VR Integration in Live Sports Broadcasting

Why AirPixel is the ideal camera tracking solution for large-scale live events and challenging lighting conditions

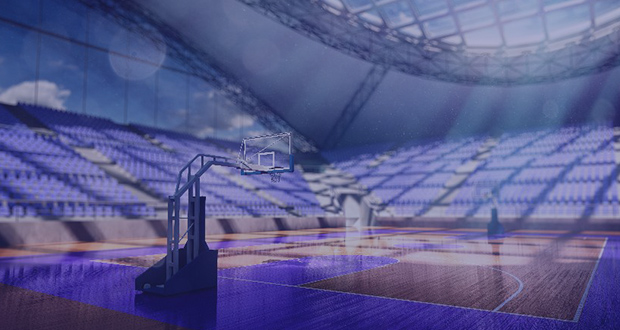

Augmented reality (AR) is changing how fans enjoy sports from the comfort of their homes. It also gives sports broadcasters new ways to excite fans and enhance brand partnerships by overlaying computer-generated elements such as graphics and video onto live game footage. Doing this requires a system that can combine live camera footage and virtual graphics dynamically.

The virtual camera needs to be matched with the real camera. Position and orientation, together with focus, iris and zoom (FIZ) values from the real camera, must be ingested into the virtual camera system while taking lens distortion into account, so that the virtual imagery maps onto the real imagery. This is what a good camera tracking system provides when integrated into an existing live broadcast or virtual production workflow.
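To make the matching step concrete, here is a minimal sketch of how a virtual point could be placed on the real image once the camera pose and lens values are known. It is an illustration under stated assumptions, not AirPixel's or any render engine's implementation: the function `project_point`, the axis convention, the pan-only rotation and the single radial distortion coefficient `k1` are all hypothetical simplifications. Zoom is represented by a focal length in pixels; focus and iris are not modelled.

```python
import math

def project_point(point, cam_pos, pan_deg, focal_px, k1, principal):
    """Project a world point to pixel coordinates for a pan-only camera.

    Assumed axes: X right, Y down, Z forward at pan = 0; a positive pan
    turns the camera toward +X. All names here are illustrative.
    """
    dx, dy, dz = (p - c for p, c in zip(point, cam_pos))
    pan = math.radians(pan_deg)
    # Express the offset in the camera frame (inverse of the pan rotation).
    x_cam = dx * math.cos(pan) - dz * math.sin(pan)
    y_cam = dy
    z_cam = dx * math.sin(pan) + dz * math.cos(pan)
    if z_cam <= 0:
        return None  # point is behind the camera, nothing to draw
    # Ideal pinhole projection onto the normalised image plane.
    xn, yn = x_cam / z_cam, y_cam / z_cam
    # One-term radial distortion so the graphic bends like the real lens.
    scale = 1.0 + k1 * (xn * xn + yn * yn)
    cx, cy = principal
    return (cx + focal_px * xn * scale, cy + focal_px * yn * scale)

# A virtual billboard corner 10 m in front of the camera and 1 m to the right.
print(project_point((1.0, 0.0, 10.0), (0.0, 0.0, 0.0), 0.0, 1000.0, -0.1, (960.0, 540.0)))
```

If the tracked pan, position or zoom were wrong, the projected pixel would drift away from the real object it is meant to sit on, which is why accurate, genlocked tracking data matters.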

About AirPixel

AirPixel is a radio and inertial sensing technology that can be used to track various cameras (cable cams, Steadicams, crane-mounted remote heads, remote PTZ cameras, drones) in very large areas, both indoors and outdoors. It can be used for AR and virtual production in large LED studios, stadiums, concert venues and racetracks. This was proven at the NCAA men’s basketball Final Four in April 2022, where TV viewers were shown large virtual billboards above the playing field displaying the teams’ logos as well as ads during breaks.

Case study

When WarnerMedia (Turner Sports) wanted to add non-static AR content to the camera footage from SkyCam, they turned to AirPixel to track the SkyCam flying in an area of 300 x 300 ft at the Caesars Superdome in New Orleans, LA. Only 14 AirPixel beacons were mounted in the venue, and an AirPixel receiver was fixed to the bottom of the SkyCam. AirPixel took the FIZ data from the Fuji lens mounted on a Sony camera, combined it with the tracking data and sent it to Pixotope, which handled the AR integration.

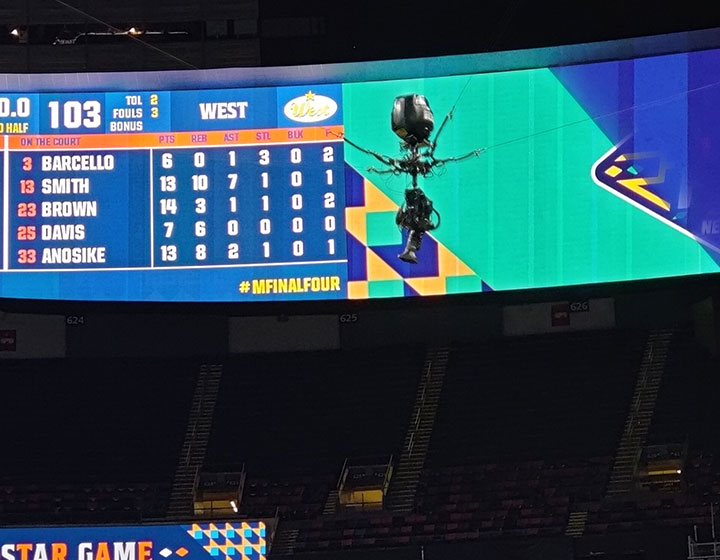

Virtual billboards showing the teams’ logos

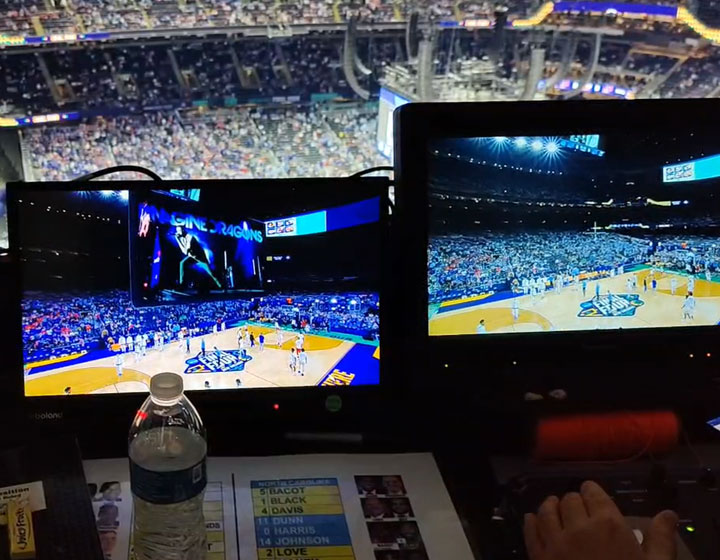

Composite image with virtual elements and raw camera feed side-by-side

SkyCam being tracked by AirPixel flying above the playing field

AirPixel receiver mounted on the bottom of the SkyCam

Which sports broadcasting challenges does AirPixel help to address?

Wide-area tracking

Venues are usually large. AirPixel can track cameras in areas ranging from as small as 4 x 4 m (about 12 x 12 ft) to as large as 100 x 100 m (about 300 x 300 ft) and beyond, with the same hardware setup.

Variation in lighting and weather conditions

AirPixel is based on radio technology in combination with inertial sensors. The system therefore operates independently of lighting and weather conditions, both indoors and outdoors.

Non-permanent installations

Setting up an AirPixel system in a large venue takes hours instead of days. Beacons can be mounted on stand-alone tripods or attached to rigging structures such as trusses. There is no need to connect the beacons in a network, and each beacon can run off a battery or an individual power supply.

More advantages ...

Small on-camera footprint

AirPixel’s on-camera receiver consists of an antenna/sensor part and a separate processing unit. The antenna/sensor is mounted on the top or bottom of the camera. The processing unit can conveniently be placed elsewhere on the camera rig.

Reliable & robust

AirPixel’s technology originates from the automotive industry, where reliability and robustness are key. The system operates reliably over long periods without needing to be reset or re-calibrated. If a beacon is moved or damaged, AirPixel can easily be re-calibrated.

Integration with existing AR broadcast pipelines

AirPixel can take genlocked FIZ data from lens and camera systems and feed it into an existing AR or virtual production platform such as Pixotope, disguise, Zero Density, Vizrt or Unreal Engine.

Contact AirPixel today and see how we can work together.

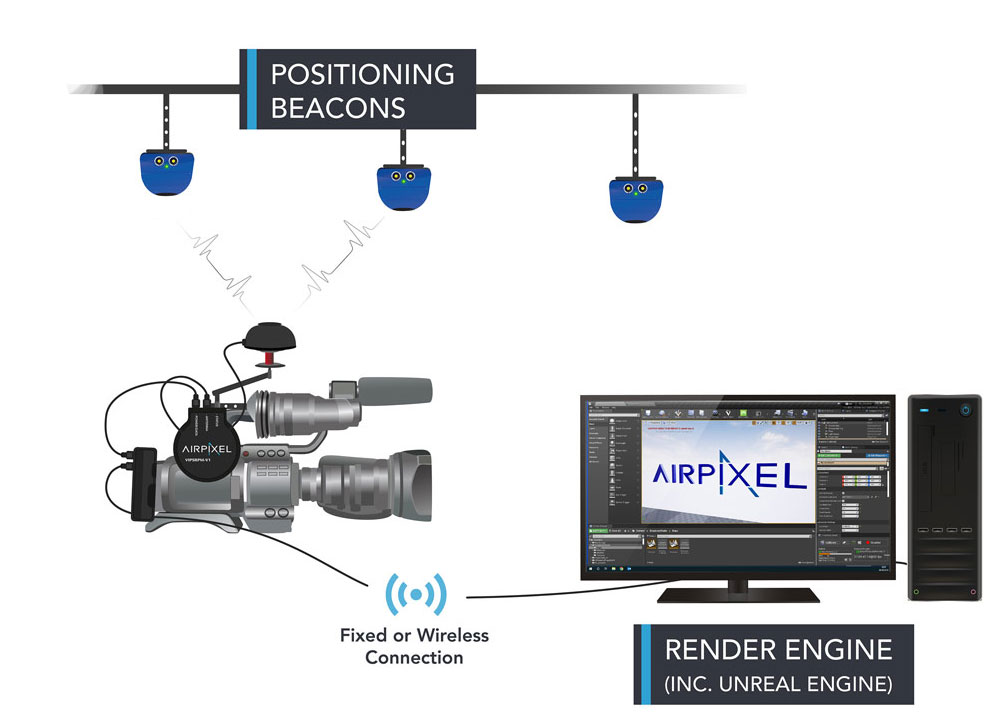

AirPixel works by using a collection of stand-alone beacons, which can be battery powered or permanently powered. The beacons communicate over ultra-wideband (UWB) radio with an on-camera receiver (rover). The rover calculates its position and orientation from the UWB data and an internal inertial measurement unit (IMU). This data is then processed through an advanced filter algorithm to give an accurate output of X, Y, Z, Pan, Tilt and Roll.
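The filter algorithm itself is not described here, so the sketch below swaps in a basic one-dimensional complementary filter purely to illustrate the general idea of combining the two sensor types: inertial data predicts the motion between radio fixes, and each radio fix pulls the estimate back so that inertial drift cannot accumulate. The function `fuse_step`, its `gain` parameter and the 100 Hz step size are assumptions for illustration, not AirPixel's method.

```python
def fuse_step(pos, vel, accel, uwb_pos, dt, gain=0.1):
    """One update of a simple 1-D complementary filter (illustrative only).

    pos, vel : current position (m) and velocity (m/s) estimates
    accel    : acceleration reported by the inertial sensor (m/s^2)
    uwb_pos  : position fix derived from the radio measurements (m)
    gain     : 0..1, how strongly the radio fix corrects the prediction
    """
    # Predict: dead-reckon forward using the inertial measurement.
    vel_pred = vel + accel * dt
    pos_pred = pos + vel * dt + 0.5 * accel * dt * dt
    # Correct: move the prediction part of the way toward the radio fix.
    pos_new = pos_pred + gain * (uwb_pos - pos_pred)
    return pos_new, vel_pred

# A stationary camera at 5 m, starting from a wrong initial estimate of 0 m.
pos, vel = 0.0, 0.0
for _ in range(200):  # 200 steps at 100 Hz = 2 seconds
    pos, vel = fuse_step(pos, vel, accel=0.0, uwb_pos=5.0, dt=0.01)
print(pos)  # has converged to very nearly 5.0
```

A production system would track all six degrees of freedom and weight each sensor by its noise characteristics, typically with a Kalman-style filter, but the predict-then-correct structure is the same.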

The rover connects to a lightweight control unit, which combines the position data with lens FIZ data, genlocks the output and transmits it to the render engine via an Ethernet or serial connection. At this point the data is also formatted either in the AirPixel proprietary format, ideal for use with our custom Live Link plugin, or in FreeD format for extended software compatibility.

To set up the system, beacons are placed around the perimeter of the stage and/or above the stage at varying heights and locations. The beacons are then surveyed using a high-speed robotic total station and bespoke in-house software. Using this method, it is possible to set the beacon locations with millimetre accuracy and still complete setup in less than 2 hours for most configurations.

AirPixel works with many camera rigging systems and has been used on dollies, Steadicams, jibs, cranes and cable cams. It also works in any lighting conditions, including total darkness. In addition, we integrate with popular third-party products to provide the best solution for your needs. This means that accurate data can often be provided on shots where tracking would traditionally be difficult or impossible.

Specifications

| Parameter | Value |
| --- | --- |
| Update rate | max. 100 Hz |
| Genlocked output rate | 23.98, 24, 25, 29.97, 29.97 Drop, 30, 47.95, 48, 50, 59.94, 59.94 Drop, 60 |
| Position accuracy | X: ±2 cm, Y: ±2 cm, Z: ±5 cm |
| Angular accuracy | Tilt: ±0.2°, Roll: ±0.2°, Pan: ±0.5° |
| Latency | 50 ms |
| Positional resolution | 1 mm |
| Protocol support | AirPixel or FreeD |
| Max. tracking speed | 270 km/h (75 m/s) |
| Max. number of receivers | 5 (per UWB channel) |
| Max. number of beacons | 200 (per UWB channel) |
| Max. coverage (assuming 30 m beacon spacing and square volume) | 390 m x 390 m (152,100 m², 1.6 million ft²) |
| UWB channels | Channel 4: 3993.6 MHz ±450 MHz; Channel 7: 6489.6 MHz ±450 MHz |
| UWB transmit power | -41.3 dBm/MHz |
| Rover dimensions | 7 x 4 cm |
| Beacon dimensions | 13 x 7.5 cm |
| Power requirements | 7 to 30 V DC, 100 mA |
| IP rating | Beacon: IP67; Rover: TBC |
| Operating temperature | -20 °C to +60 °C |
| Storage temperature | -40 °C to +85 °C |
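The coverage and speed figures can be cross-checked with some simple arithmetic. The sketch below shows one reading that reproduces the 390 m figure: the largest square grid that fits within 200 beacons, spaced 30 m apart. This is an inference from the published numbers rather than a stated derivation, and the function names are illustrative. The second function estimates how far a camera at maximum tracking speed travels between two updates.

```python
import math

FT2_PER_M2 = 10.7639  # square feet in one square metre

def square_grid_coverage(max_beacons, spacing_m):
    """Side length and area of the largest square beacon grid (assumed layout)."""
    per_side = math.isqrt(max_beacons)       # 200 beacons -> 14 per side (196 used)
    side_m = (per_side - 1) * spacing_m      # 13 gaps of 30 m -> 390 m
    return per_side, side_m, side_m ** 2

def travel_between_updates(speed_kmh, update_hz):
    """Distance in metres covered between two consecutive tracking updates."""
    return (speed_kmh / 3.6) / update_hz

per_side, side_m, area_m2 = square_grid_coverage(200, 30)
print(per_side, side_m, area_m2)             # 14 390 152100
print(round(area_m2 * FT2_PER_M2))           # about 1.6 million square feet
print(travel_between_updates(270, 100))      # about 0.75 m per update at 100 Hz
```

At 270 km/h the camera moves about 0.75 m between 100 Hz updates, which illustrates why inertial data is needed to fill in the motion between radio measurements on fast rigs.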